We explain how to extract keywords using LangChain and ChatGPT. We will create a simple Python script that executes a series of steps:

- Loop through records retrieved by a simple REST API

- Feed the text of each record into ChatGPT for it to extract the relevant keywords

- Parse the ChatGPT response and extract the keywords from it

- Count the keywords

- Sort the keywords by their count and write them into an Excel sheet

Pre-Requisites

We will be using Python 3.10 with LangChain and ChatGPT. For this we created an Anaconda environment with this command:

conda create --name langchain python=3.10

We then installed the OpenAI and LangChain Python packages using the commands below:

conda install -c conda-forge openai

conda install -c conda-forge langchain

You will also need to generate an API key on the OpenAI website: https://platform.openai.com/account/api-keys

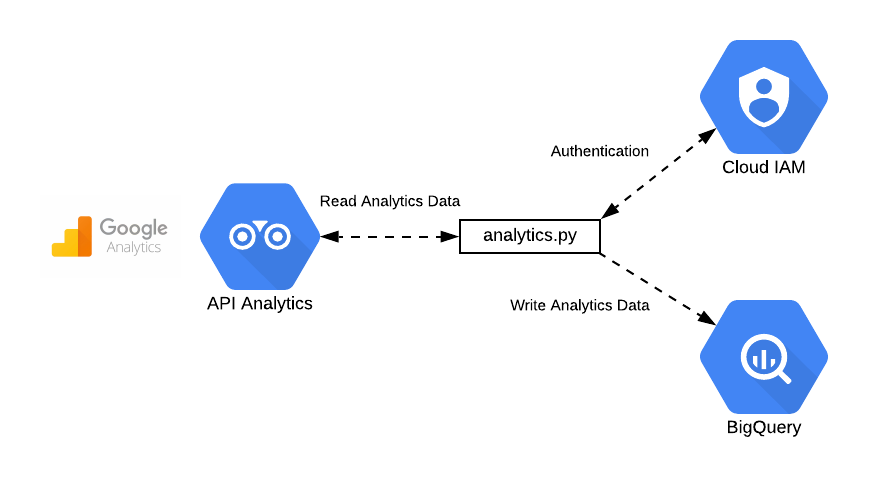

Extracting Data From a REST API

You can use virtually any REST API of your choosing which delivers unstructured text. We have used a REST API of a website we created. This API delivers a text-based title and description.

In order to get the data from the REST API we have used the requests library.

We also decided to use a Python generator to loop through each record retrieved by the REST API.
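The paging pattern behind such a generator can be sketched independently of any particular API. The example below is a minimal illustration, not the script itself: `iterate_in_batches`, `fake_fetch` and `FAKE_RECORDS` are hypothetical names, and the network call is replaced by a function that slices an in-memory list so the sketch runs offline.

```python
from typing import Callable, Iterator, List


def iterate_in_batches(fetch_page: Callable[[int, int], List[dict]],
                       batch_size: int = 100) -> Iterator[dict]:
    """Yield records one at a time, fetching them page by page.

    fetch_page(start, limit) is a hypothetical callable that returns
    at most `limit` records beginning at offset `start`.
    """
    start = 0
    while True:
        page = fetch_page(start, batch_size)
        for record in page:
            yield record
        # A short (or empty) page means there is nothing left to fetch.
        if len(page) < batch_size:
            break
        start += batch_size


# Stand-in for a REST call: slices an in-memory list instead of using the network.
FAKE_RECORDS = [{"id": i, "title": f"Video {i}"} for i in range(7)]


def fake_fetch(start: int, limit: int) -> List[dict]:
    return FAKE_RECORDS[start:start + limit]


ids = [record["id"] for record in iterate_in_batches(fake_fetch, batch_size=3)]
print(ids)  # [0, 1, 2, 3, 4, 5, 6]
```

The caller only sees a flat stream of records; the batch size and offsets stay hidden inside the generator.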

The relevant script methods are:

import requests


def extract_data(json_content):
    """
    Extracts the title, description, id and youtube id from the json object.
    :param json_content: a JSON object with the video metadata
    :return: a tuple with the title, description, id and youtube id
    """
    return (json_content['base']['title'], json_content['base']['description'], json_content['id'], json_content['youtube_id'])


def process_all_records(batch_size: int = 100):
    """
    Generator function which loops through all videos via a REST interface.
    :param batch_size: The size of the batch retrieved via the REST interface call.
    """
    start = 0
    while True:
        response = requests.get(f"https://admin.thelighthouse.world/videos?_limit={batch_size}&_start={start}")
        json_content = response.json()
        for jc in json_content:
            # Pass the content to the caller using the generator with yield
            yield extract_data(jc)
        # A batch smaller than batch_size means the last page has been reached
        if len(json_content) < batch_size:
            break
        start += batch_size

Using ChatGPT to Extract Keywords

You can give instructions to ChatGPT to extract keywords in natural language. ChatGPT allows multiple messages per input and so we can embed a common instruction and then some variable input. This is the common instruction we used:

You extract the main keywords in the text and extract these into a comma separated list. Please prefix the keywords with ‘Keywords:’
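To make the split between the fixed instruction and the variable input concrete, here is a minimal sketch. It assumes the plain role/content dictionaries of the OpenAI chat format instead of LangChain's message classes; `build_messages` is a hypothetical helper and the sample record is made up for illustration.

```python
from typing import Dict, List

# The common instruction, identical for every record.
SYSTEM_INSTRUCTION = (
    "You extract the main keywords in the text and extract these into a "
    "comma separated list. Please prefix the keywords with 'Keywords:'"
)


def build_messages(title: str, description: str) -> List[Dict[str, str]]:
    """Combine the fixed instruction with the text of one record.

    Hypothetical helper: it uses plain role/content dictionaries rather
    than LangChain's SystemMessage and HumanMessage classes.
    """
    return [
        {"role": "system", "content": SYSTEM_INSTRUCTION},
        {"role": "user", "content": f"{title} {description}"},
    ]


# Made-up sample record, only used to show the resulting structure.
messages = build_messages("Golden Heart", "A promo video for the 50th anniversary.")
for message in messages:
    print(message["role"], "->", message["content"][:40])
```

Only the second message changes from record to record, which is what keeps the answers in a consistent format.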

This is the function which implements the interaction with ChatGPT for keyword extraction:

# Assumed import path (LangChain releases from 2023); newer releases may expose these classes elsewhere
from langchain.schema import HumanMessage, SystemMessage


def extract_keywords_from_chat(chat, record_data):
    """
    Sends a chat question to ChatGPT and returns its output.
    :param chat: The object which communicates under the hood with ChatGPT.
    :param record_data: The tuple with the title, description, id and youtube id
    :return: a tuple with the text sent to ChatGPT and ChatGPT's answer
    """
    # Combine title and description into the text to be analysed
    dt_single = f"{record_data[0]} {record_data[1]}"
    resp = chat([
        SystemMessage(content=
                      "You extract the main keywords in the text and extract these into a comma separated list. Please prefix the keywords with 'Keywords:'"),
        HumanMessage(content=dt_single)
    ])
    answer = resp.content
    return dt_single, answer

And indeed ChatGPT answers most of the time in the expected format. Here are two examples of ChatGPT’s answers:

Keywords: Golden Heart, Beating, 50th Anniversary, Promo, Promotion video.

Keywords: Yogesh Sharda, meditator, guided meditation, inner freedom, peaceful state, mind, relationships, harmony, personal development trainer.
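Because the expected format is followed only most of the time, it can be useful to check an answer before parsing it. The sketch below is one possible check and not part of the original script: `has_expected_format` is a hypothetical helper, and the second sample answer is invented to show a failing case.

```python
import re

# Matches an answer that starts with the requested 'Keywords:' prefix
# followed by at least one non-space character.
EXPECTED_FORMAT = re.compile(r"^\s*keywords:\s*\S", flags=re.IGNORECASE)


def has_expected_format(answer: str) -> bool:
    """Return True if the answer begins with the 'Keywords:' prefix."""
    return EXPECTED_FORMAT.search(answer) is not None


answers = [
    # Taken from the examples above.
    "Keywords: Golden Heart, Beating, 50th Anniversary, Promo, Promotion video.",
    # Invented answer that ignores the requested format.
    "I could not find any keywords in this text.",
]
for answer in answers:
    print(has_expected_format(answer), "|", answer)
```

Answers that fail the check could be logged or retried instead of silently producing an empty keyword list.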

Processing ChatGPT’s Output

Since the output is well formatted we wrote a simple function with regular expressions that extracts the keywords.

import re


def extract_keywords(text):
    """
    Extracts the keywords from the ChatGPT generated text.
    :param text: The answer from ChatGPT, like 'Keywords: Golden Heart, Beating, 50th Anniversary, Promo, Promotion video.'
    :return: a list of lower-cased keywords, or an empty list if no keywords were found
    """
    text = text.lower()
    expression = r".*keywords:(.+?)$"
    # Use the same DOTALL flag for matching and extraction so that multi-line answers are handled consistently
    match = re.search(expression, text, flags=re.S)
    if match:
        keywords = match.group(1)
        if len(keywords) > 0:
            # Split on commas, trim whitespace and drop a trailing full stop
            return [re.sub(r"\.$", "", k.strip()) for k in keywords.strip().split(',')]
    return []

Looping Through and Output to Excel

The final part of the script is just about looping through all records and capturing the output in Excel files. We capture the keywords in each record and then count the most popular keywords using Python's `collections.Counter`.
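As a small illustration of the counting step, the sketch below feeds a few keyword lists into a `Counter`. The keyword lists are sample data made up for this example.

```python
from collections import Counter

# Keyword lists as they might come back for three records (sample data).
keywords_per_record = [
    ["meditation", "inner freedom", "mind"],
    ["meditation", "harmony"],
    ["mind", "meditation"],
]

popular_keywords = Counter()
for keywords in keywords_per_record:
    # update() adds one to the count of every keyword in the list.
    popular_keywords.update(keywords)

# most_common() returns (keyword, count) pairs, highest count first.
print(popular_keywords.most_common(2))  # [('meditation', 3), ('mind', 2)]
```

Since `most_common()` already orders the pairs by descending count, the result can be written out as it is.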

Here is the loop function:

from collections import Counter

# Assumed import path (LangChain releases from 2023); newer releases may expose this class elsewhere
from langchain.chat_models import ChatOpenAI


def process_keywords():
    """
    Instantiates the object which interfaces with ChatGPT and loops through the records
    capturing the keywords for each record and also counting the occurrence of each of these keywords.
    """
    # model_name is not defined in this snippet; it is expected to be set at module level
    chat = ChatOpenAI(model_name=model_name, temperature=0)
    popular_keywords = Counter()
    keyword_data = []
    for i, record_data in enumerate(process_all_records()):
        try:
            dt_single, answer = extract_keywords_from_chat(chat, record_data)
            extracted_keywords = extract_keywords(answer)
            popular_keywords.update(extracted_keywords)
            print(i, dt_single, popular_keywords)
            keyword_data.append({'id': record_data[2], 'youtube_id': record_data[3], 'title': record_data[0], 'description': record_data[1],
                                 'keywords': ','.join(extracted_keywords)})
        except Exception as e:
            # Report the failure and continue with the next record
            print(f"Error occurred: {e}")
    write_to_excel(popular_keywords, keyword_data)

And here is the function which captures the record information with corresponding keywords and the overall keyword count in Excel files:

import pandas as pd


def write_to_excel(popular_keywords, keyword_data):
    """
    Captures the keywords of each record in one file and then the overall keyword count in another one.
    :param popular_keywords: The keyword counter
    :param keyword_data: Contains the record data and the extracted keywords
    """
    # One row per record with its extracted keywords
    pd.DataFrame(keyword_data).to_excel('keyword_info.xlsx')
    # One row per keyword with its overall count
    keyword_data = [{'keyword': e[0], 'count': e[1]} for e in popular_keywords.most_common()]
    pd.DataFrame(keyword_data).sort_values(by=['count'], ascending=False).to_excel('popular_keywords.xlsx')

The full script is published as a GitHub Gist.

Conclusion

ChatGPT allows multiple messages per input and you can take advantage of this by using the SystemMessage class in LangChain. If the instruction given in this SystemMessage is clear, you can use ChatGPT to perform specific NLP tasks, like keyword extraction, translation, sentiment analysis, classification, text generation with specific flavours and much more.

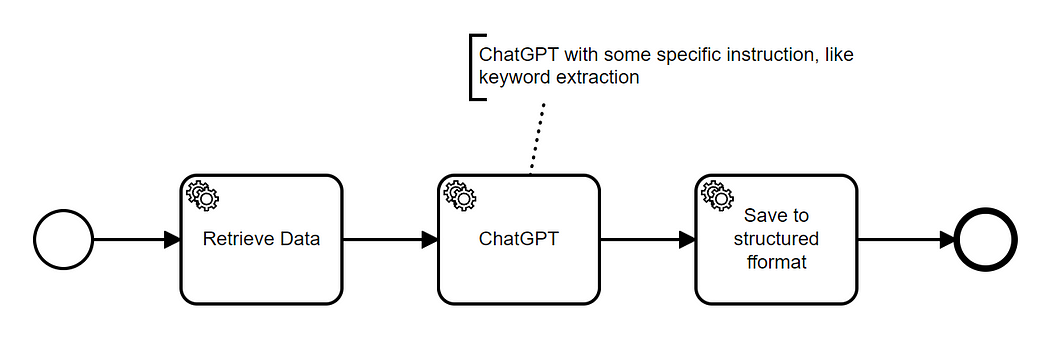

The output can then be stored in a structured format. You could represent this idea with a diagramme like this one: